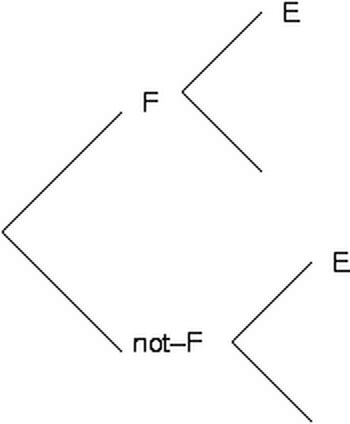

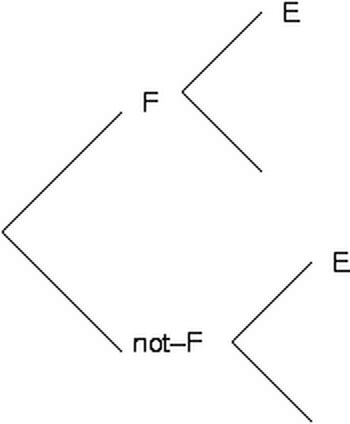

Conditioned assessment (Kleinmuntz et al., 1996)

Estimate the probability of some event E.

Estimate the probability of E given some other event F, p(E|F)

Estimate p(E|not-F)

Estimate p(F)

Compute E', the conditioned estimate of p(E), as p(F)×p(E|F) + p(not-F)×p(E|not-F)
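The conditioned estimate can be sketched in a few lines of Python. The function name `conditioned_estimate` and the three input probabilities are hypothetical, chosen only for illustration; they are not taken from Kleinmuntz et al.

```python
def conditioned_estimate(p_f, p_e_given_f, p_e_given_not_f):
    """Return E' = p(F)*p(E|F) + p(not-F)*p(E|not-F)."""
    for p in (p_f, p_e_given_f, p_e_given_not_f):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie between 0 and 1")
    # p(not-F) is 1 - p(F), because F and not-F are complements.
    return p_f * p_e_given_f + (1.0 - p_f) * p_e_given_not_f


# Hypothetical judgments, for illustration only.
e_prime = conditioned_estimate(p_f=0.3, p_e_given_f=0.8, p_e_given_not_f=0.1)
print(round(e_prime, 2))
```

Comparing E' with a direct estimate of p(E) is one way to check whether a set of judgments hangs together.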

What is the probability that Penn will abolish football (as it has boxing) within 5 years?

What was the probability of a health-reform bill in spring 2009?

What is the probability that the Republicans will have a majority in the House after the 2012 election? In the Senate?

Other examples:

Intrade

Iowa Electronic Markets

National Weather Service (NWS)

National Hurricane Center

What is probability?

A numerical measure of the strength of a belief in a certain proposition: p(proposition).

Theories: frequency, logical, personal.

Rules of coherence: addition, multiplication, conditional probability, independence.

Frequency theory: probability is the proportion of the times an event might have happened in the past in which it actually did, e.g., p("I get run over crossing 38th street after class") =

$$\frac{\text{number of times people got run over crossing 38th St.}}{\text{number of times people crossed 38th St.}}$$

But why this denominator? Why this numerator?

But how often can we apply this in the real world?

(not A is called the "complement" of A)

| rain | not rain |

I.e., if one of the propositions is true, that "excludes" the possibility of the other being true: the two propositions "mutually exclude" each other.

When propositions A and B are mutually exclusive: p(A or B) = p(A) + p(B)

e.g. p(yes) = p(female-yes or male-yes) = p(male-yes) + p(female-yes)
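The addition rule can be checked by counting outcomes. The sketch below assumes a fair six-sided die, which is not part of the lecture example; `prob` and the event functions are hypothetical helper names.

```python
from fractions import Fraction

OUTCOMES = range(1, 7)  # faces of a fair six-sided die (illustrative assumption)


def prob(event):
    """Probability that a roll satisfies `event`, by counting equally likely faces."""
    hits = sum(1 for face in OUTCOMES if event(face))
    return Fraction(hits, len(OUTCOMES))


def low(face):
    return face <= 2


def high(face):
    return face >= 5


def even(face):
    return face % 2 == 0


# Mutually exclusive: a roll cannot be both low and high, so the rule holds.
exclusive_ok = prob(lambda f: low(f) or high(f)) == prob(low) + prob(high)

# Not mutually exclusive: 6 is both even and high, so simple addition overcounts.
overlap_ok = prob(lambda f: even(f) or high(f)) == prob(even) + prob(high)

print(exclusive_ok, overlap_ok)  # True False
```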


For example, the expected value of "$10 if a coin comes up heads" is $5.

What is the EV of "$10 if a coin comes up heads twice (in 2 flips)"?

EV is (roughly) the average value if the bet were repeated.
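A minimal sketch of the expected-value calculation, assuming a fair coin (which the $5 figure implies). `expected_value` is a hypothetical helper name.

```python
from fractions import Fraction
from itertools import product


def expected_value(outcomes):
    """Sum of probability * payoff over (probability, payoff) pairs."""
    return sum(p * payoff for p, payoff in outcomes)


# $10 if a fair coin comes up heads.
ev_one_flip = expected_value([(Fraction(1, 2), 10), (Fraction(1, 2), 0)])

# $10 if a fair coin comes up heads on both of 2 flips.
flips = list(product("HT", repeat=2))  # HH, HT, TH, TT, each with probability 1/4
ev_two_heads = expected_value(
    (Fraction(1, len(flips)), 10 if flip == ("H", "H") else 0) for flip in flips
)

print(ev_one_flip, ev_two_heads)  # 5 5/2
```

Under these assumptions the two-flip bet is worth $2.50, half the value of the one-flip bet.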

The value of a bet on one event should not change when you break it into two events.

For example, the expected value of (and willingness to pay for)

$10 if "Red card"

should be the same as:

$10 if "Heart"

and

$10 if "Diamond"

Suppose you are willing to pay or accept:

$4 for $10 if "Red card"

$2.50 for $10 if "Heart"

$2.50 for $10 if "Diamond"

You have a ticket for the first bet. I buy it from you for $4. You are now ahead by $4.

Then I sell you tickets for the second and third bets for $2.50 each. You are now behind by $1.

Then I point out that the second and third bets together are the same as the first bet, so you are willing to trade those two tickets for a ticket for the first bet. You are still behind by $1, and we start over....
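The cycle of trades above can be sketched as a small simulation. The prices come from the example; the names `PRICES` and `run_cycle` are hypothetical.

```python
# Prices you would pay or accept for each $10 ticket, from the example above.
PRICES = {"red": 4.00, "heart": 2.50, "diamond": 2.50}


def run_cycle(balance):
    """Apply one round of the three trades and return your new balance."""
    balance += PRICES["red"]                        # I buy your "Red card" ticket
    balance -= PRICES["heart"] + PRICES["diamond"]  # I sell you the two suit tickets
    # You swap the two suit tickets for a "Red card" ticket: no money changes hands.
    return balance


balance = 0.0
history = []
for _ in range(3):
    balance = run_cycle(balance)
    history.append(balance)

print(history)  # [-1.0, -2.0, -3.0]
```

Each pass leaves you holding the same ticket you started with and $1 poorer, which is why prices that violate the addition rule can be exploited indefinitely.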

The conditional probability of proposition A given proposition B is the probability that we would assign to A if we knew that B were true, that is, the probability of A conditional on B being true. We write p(A|B) or p(A/B); here the bar or slash does not mean "divided by".

For example, what is the probability that Obama wins if Perry is the nominee?

The Conditional Probability Rule is: p(A | B) = p(A and B) / p(B)

(This time the / does mean "divided by".)

In other words: p(A and B) / p(B) = p(A | B)

or:

p(A and B) = p(A | B) × p(B), the multiplication rule.

p(Obama-win and Perry-nom) = p(Obama-win | Perry-nom) × p(Perry-nom)
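Both rules can be checked by counting cards. This sketch assumes a standard 52-card deck with every card equally likely; `prob`, `is_heart`, and `is_red` are hypothetical helper names.

```python
from fractions import Fraction
from itertools import product

RANKS = "A 2 3 4 5 6 7 8 9 10 J Q K".split()
SUITS = ["hearts", "diamonds", "clubs", "spades"]
DECK = list(product(RANKS, SUITS))  # 52 equally likely (rank, suit) cards


def prob(event):
    """Probability that a randomly drawn card satisfies `event`."""
    return Fraction(sum(1 for card in DECK if event(card)), len(DECK))


def is_heart(card):
    return card[1] == "hearts"


def is_red(card):
    return card[1] in ("hearts", "diamonds")


# Conditional Probability Rule: p(A | B) = p(A and B) / p(B)
p_red = prob(is_red)
p_heart_and_red = prob(lambda c: is_heart(c) and is_red(c))
p_heart_given_red = p_heart_and_red / p_red

# Direct check: restrict attention to the red cards and count hearts among them.
red_cards = [c for c in DECK if is_red(c)]
direct = Fraction(sum(1 for c in red_cards if is_heart(c)), len(red_cards))

print(p_heart_given_red, direct)  # 1/2 1/2
```

Multiplying back, p(Heart | Red) × p(Red) = 1/2 × 1/2 = 1/4, which is p(Heart and Red), as the multiplication rule requires.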

Estimate the probability of some event E.

Estimate the probability of E given some other event F, p(E|F)

Estimate p(E|not-F)

Estimate p(F)

Compute E', the conditioned estimate of p(E), as p(F)×p(E|F) + p(not-F)×p(E|not-F)

Are "It is raining" and "Melissa is mowing the lawn" independent?

Are "The first car to pass us will be a Dodge" and "The second car to pass us will be a BMW" independent?

When A and B are independent, then the multiplication rule can be simplified, because p(A | B) = p(A). Hence: p(A and B) = p(A) × p(B).
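One way to test independence is to compare p(A and B) with p(A) × p(B). The sketch below assumes two flips of a fair coin, which is not one of the lecture's examples; all function names are hypothetical.

```python
from fractions import Fraction
from itertools import product

FLIPS = list(product("HT", repeat=2))  # HH, HT, TH, TT, equally likely


def prob(event):
    """Probability that a pair of flips satisfies `event`."""
    return Fraction(sum(1 for f in FLIPS if event(f)), len(FLIPS))


def independent(a, b):
    """True when p(A and B) equals p(A) * p(B)."""
    return prob(lambda f: a(f) and b(f)) == prob(a) * prob(b)


def first_heads(f):
    return f[0] == "H"


def second_heads(f):
    return f[1] == "H"


def both_heads(f):
    return f == ("H", "H")


print(independent(first_heads, second_heads))  # True
print(independent(first_heads, both_heads))    # False
```

The second pair fails because knowing the first flip was heads raises the probability of two heads from 1/4 to 1/2.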

p(not-H & D) = p(D | not-H) × p(not-H)

p(H & D) = p(D | H) × p(H)

Thus:

$$p(H \mid D) = \frac{p(H \,\&\, D)}{p(\text{not-}H \,\&\, D) + p(H \,\&\, D)} = \frac{p(D \mid H) \times p(H)}{p(D \mid H) \times p(H) + p(D \mid \text{not-}H) \times p(\text{not-}H)}$$

(1) p(H | D) = p(H and D) / p(D)

But we don't know p(H and D) or p(D). Let's try to use what we do know to find out what they are. The Multiplication Rule is:

(2) p(D and H) = p(D | H) × p(H)

We can change (D and H) to (H and D), since they mean the same thing:

(3) p(H and D) = p(D | H) × p(H)

So now we can work out p(H and D) from two things we know: p(D | H) and p(H). If we use (3) to change (1), we can get a form of Bayes's theorem:

(4) p(H | D) = p(D | H) × p(H) / p(D)

Now we just need to get p(D). We can use the fact that D must happen either with H or with not-H:

(5) p(D) = p(D and H) + p(D and not-H)

Then we can use the Multiplication Rule again to replace the "and" terms:

(6) p(D) = p(D | H) × p(H) + p(D | not-H) × p(not-H)

By replacing "p(D)" in (4) with our expression from (6) we get:

(7)

$$p(H \mid D) = \frac{p(D \mid H) \times p(H)}{p(D \mid H) \times p(H) + p(D \mid \text{not-}H) \times p(\text{not-}H)}$$

So the doctor could use that formula to figure out whether a mammogram would be worthwhile. Putting in the numbers:

$$p(H \mid D) = \frac{0.9 \times 0.1}{0.9 \times 0.1 + 0.2 \times 0.9} = \frac{0.09}{0.09 + 0.18} = \frac{0.09}{0.27} \approx 0.33$$
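The arithmetic above can be checked with a short function. The inputs p(D|H) = 0.9, p(H) = 0.1, and p(D|not-H) = 0.2 are read off the worked numbers; the function name `posterior` is hypothetical.

```python
def posterior(p_d_given_h, p_h, p_d_given_not_h):
    """Bayes's theorem, form (7): p(H|D) from p(D|H), p(H), and p(D|not-H)."""
    joint_h = p_d_given_h * p_h                  # p(H and D)
    joint_not_h = p_d_given_not_h * (1.0 - p_h)  # p(not-H and D)
    return joint_h / (joint_h + joint_not_h)     # denominator is p(D), as in (6)


p = posterior(p_d_given_h=0.9, p_h=0.1, p_d_given_not_h=0.2)
print(round(p, 2))  # 0.33
```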

Conditioned assessment lets us compute p(y) from p(y|m), p(y|f), and p(m):

p(y) = p(y|m)×p(m) + p(y|f)×(1-p(m))

which is the same as p(y&m) + p(y&f).

Now suppose we want to compute p(m|y). By Bayes's theorem,

p(m|y) = p(m&y) / [p(m&y) + p(f&y)]

or p(m|y) = p(m&y) / p(y).

In other words, conditioned assessment gives us the denominator of Bayes's theorem for this calculation.

$$p(H \mid D) = \frac{p(D \mid H) \times p(H)}{p(D \mid H) \times p(H) + p(D \mid \text{not-}H) \times p(\text{not-}H)}$$

$$p(\text{not-}H \mid D) = \frac{p(D \mid \text{not-}H) \times p(\text{not-}H)}{p(D \mid H) \times p(H) + p(D \mid \text{not-}H) \times p(\text{not-}H)}$$

$$\frac{p(H \mid D)}{p(\text{not-}H \mid D)} = \frac{p(D \mid H) \times p(H)}{p(D \mid \text{not-}H) \times p(\text{not-}H)} = \frac{p(D \mid H)}{p(D \mid \text{not-}H)} \times \frac{p(H)}{p(\text{not-}H)}$$

posterior odds = diagnostic ratio × prior odds

log(posterior odds) = log(diagnostic ratio) + log(prior odds)
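A minimal sketch of the odds form, reusing the numbers from the mammogram calculation (p(H) = 0.1, p(D|H) = 0.9, p(D|not-H) = 0.2). The variable names are hypothetical.

```python
import math

p_h = 0.1              # prior probability of H
p_d_given_h = 0.9      # p(D | H)
p_d_given_not_h = 0.2  # p(D | not-H)

prior_odds = p_h / (1.0 - p_h)                    # p(H) / p(not-H)
diagnostic_ratio = p_d_given_h / p_d_given_not_h  # p(D|H) / p(D|not-H)
posterior_odds = diagnostic_ratio * prior_odds

# Convert odds back to a probability: p = odds / (1 + odds).
posterior_prob = posterior_odds / (1.0 + posterior_odds)

# In log form the update is a sum rather than a product.
log_form_agrees = math.isclose(
    math.log(posterior_odds),
    math.log(diagnostic_ratio) + math.log(prior_odds),
)

print(round(posterior_odds, 2), round(posterior_prob, 2), log_form_agrees)
```

The posterior odds come out to about 0.5 (1 to 2), which corresponds to the same probability of about 0.33 obtained from the probability form.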